Ryan Wolcott Receives Best Student Paper Award at IROS 2014

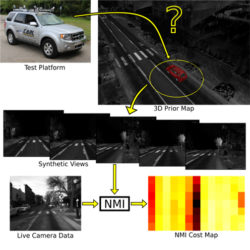

The paper presents a new visual localization algorithm that allows an autonomous car to determine its position with sub-lane precision.

Ryan Wolcott, a doctoral student in CSE, received a Best Student Paper Award at the 2014 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). The award is given to the most outstanding paper authored primarily by, and presented by, a student. The paper, entitled "Visual Localization within LIDAR Maps for Automated Urban Driving," was coauthored by his advisor, Prof. Ryan Eustice.

With self-driving cars becoming a reality, Wolcott's paper addresses one of the most significant roadblocks to autonomous vehicles: the prohibitive cost of the sensor suites necessary for localization.

Wolcott stated, “The paper presents a new visual localization algorithm that allows a car to precisely know where it is at with sub-lane precision. The proposed technique enables autonomous driving without having to rely on GPS, which is fragile for a number of reasons (multi-path, low-accuracy, GPS-denied conditions). The new algorithm allows for matching a forward-looking dash-cam image to a pre-built 3-dimensional LIDAR map of the environment. By generating thousands of synthetic views of the scene (similar to video game-like renderings of the world), we used normalized mutual information to evaluate these registration candidates. We compare our work against the state-of-the-art in LIDAR-based localization, showing that we can achieve a similar order of magnitude error rate with a sensor that is several orders of magnitude cheaper.”
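As a rough illustration of the scoring step Wolcott describes, the sketch below computes normalized mutual information between a camera image and a set of candidate synthetic views, then picks the best-scoring candidate. It is not the researchers' implementation: the function names, the 32-bin histogram, the 8-bit grayscale image format, and the choice of the common definition NMI(A, B) = (H(A) + H(B)) / H(A, B) are all assumptions made for the example, and rendering the synthetic views from a LIDAR map is left out entirely.

```python
import numpy as np


def entropy(p):
    """Shannon entropy (in bits) of a probability array; zero entries are skipped."""
    p = p[p > 0]
    return float(-np.sum(p * np.log2(p)))


def normalized_mutual_information(a, b, bins=32):
    """NMI(A, B) = (H(A) + H(B)) / H(A, B) for two same-sized grayscale images.

    Assumes 8-bit intensities in [0, 255]. The value is near 1 for
    unrelated images and reaches 2 when one image determines the other.
    """
    joint, _, _ = np.histogram2d(
        a.ravel(), b.ravel(), bins=bins, range=[[0, 256], [0, 256]]
    )
    joint = joint / joint.sum()          # joint intensity distribution
    h_a = entropy(joint.sum(axis=1))     # marginal entropy of image a
    h_b = entropy(joint.sum(axis=0))     # marginal entropy of image b
    h_ab = entropy(joint.ravel())        # joint entropy
    if h_ab == 0.0:
        # Both images are constant, so there is nothing to match on.
        return 1.0
    return (h_a + h_b) / h_ab


def best_candidate(camera_image, synthetic_views):
    """Return (index, scores) for the synthetic view with the highest NMI."""
    scores = [normalized_mutual_information(camera_image, v) for v in synthetic_views]
    return int(np.argmax(scores)), scores
```

In a full system each synthetic view would correspond to a candidate vehicle pose, so the index returned by `best_candidate` would identify the most likely pose among those tried. Mutual information suits this kind of comparison because it rewards statistical dependence between intensities rather than requiring the camera image and the rendered view to have the same brightness.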

The image above shows an overview of the researchers’ proposed visual localization system. They sought to localize a monocular camera with a 3D prior map (augmented with surface reflectivities) constructed from 3D LIDAR scanners.

A video summary of the paper is also available.
